#HUGIN BAYESIAN TRIAL#

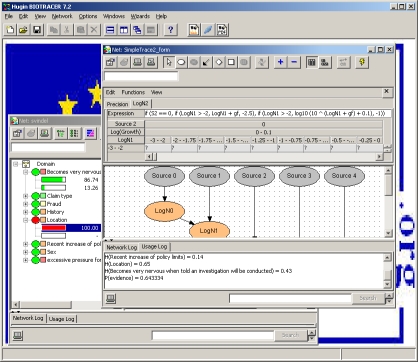

With an ontology loaded, you can now select among the various concepts shown in the folder tree. This folder tree is an instance of jstree and is therefore interactive and selectable. By holding the Ctrl key you can select multiple concepts. Selecting a concept or category of concepts adds it and any subconcepts to the checkbox list just to the right of the folder tree. Some ontologies are too large to view easily in a folder display, so this list keeps track of everything you have selected so far. The checkbox group is also selectable, so network nodes can be removed from or added to the graph without re-navigating the folder tree.

If dependencies among the selected concepts exist, the network graph for this set of concepts is immediately computed and displayed in this panel. If the dependency properties in the ontology have numeric tags specifying the strength of dependency ("dependsOn3", for example), then some statistical metrics describing the network (nodes and edges) are computed and displayed above the graph. The "Mean Evidence" metric is the mean of the dependency arc strengths. The term "Evidence" comes from a medical context, where it denotes a ranking system used to describe the strength of the results measured in a clinical trial or research study, though this value could more generally represent some other type of dependency.
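As a rough illustration of how a "Mean Evidence" value could be derived from numerically tagged dependency properties, here is a minimal Python sketch. It assumes the strength is simply the trailing integer in a property name such as "dependsOn3". The function names, the triple representation of arcs, and the example concepts are hypothetical and are not taken from the tool itself.

```python
import re
from statistics import mean

# Assumption: a property named "dependsOn<digits>" carries that number as its
# dependency strength, e.g. "dependsOn3" -> 3. Untagged names carry no strength.
_STRENGTH_TAG = re.compile(r"^dependsOn(\d+)$")


def arc_strength(property_name):
    """Return the numeric strength tag of a dependency property, or None."""
    match = _STRENGTH_TAG.match(property_name)
    return int(match.group(1)) if match else None


def mean_evidence(arcs):
    """Mean strength over (source, property_name, target) triples.

    Arcs whose property has no numeric tag are skipped; returns None when
    no arc carries a tag.
    """
    strengths = [arc_strength(prop) for _, prop, _ in arcs]
    tagged = [s for s in strengths if s is not None]
    return mean(tagged) if tagged else None


# Hypothetical arcs between selected concepts.
arcs = [
    ("Fever", "dependsOn3", "Infection"),
    ("Cough", "dependsOn1", "Infection"),
    ("Fatigue", "dependsOn", "Anemia"),  # untagged, so ignored
]
print(mean_evidence(arcs))
```

Skipping untagged arcs, rather than treating them as strength zero, is a design choice made here for illustration; the tool may handle them differently.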

#HUGIN BAYESIAN SOFTWARE#

The domain ontology specifies which concepts depend on others, so it is not necessary to have a priori knowledge of these dependencies. When the user selects a set of concepts of interest, the tool automatically creates the network nodes and arcs in a graph object and displays the structure of the network, among other things (described in more detail elsewhere in this document). This eases the development of Bayesian networks, in that the user need not reconstruct a network from scratch for every use case, nor search the literature or interview domain experts to establish an appropriate network structure. The interface is also interactive: changes in concept selection, dependency level, and other parameters update the graphics and other reactive features in real time.

In order to use this tool, you must select or upload an ontology. The main sidebar panel has options to do both: you can select a preloaded ontology or load one of your own. If you upload an ontology, it will appear in the selectable list of available ontologies. A simple ontology ("testontology.owl") is available for experimenting with software features. These ontologies contain object properties that define the dependencies between classes. Once an ontology is selected, the software automatically reads the class-subclass hierarchy and recreates it in the main sidebar panel as a folder tree.
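The step of turning a concept selection into network nodes and arcs can be sketched as follows. This is a minimal Python sketch and not the tool's actual implementation. It assumes the ontology's dependencies have already been read into a plain dictionary mapping each concept to the concepts it depends on, and that arcs point from the concept depended upon to the dependent concept. The function name `build_network` and the example concepts are hypothetical.

```python
def build_network(dependencies, selected):
    """Return (nodes, arcs) for the selected concepts only.

    dependencies: dict mapping a concept to the list of concepts it depends on
                  (assumed to be pre-extracted from the ontology).
    selected:     iterable of concept names chosen by the user.
    An arc (parent, child) is kept only if both ends are selected.
    """
    chosen = set(selected)
    nodes = sorted(chosen)
    arcs = sorted(
        (parent, child)
        for child in chosen
        for parent in dependencies.get(child, [])
        if parent in chosen
    )
    return nodes, arcs


# Hypothetical dependencies; "Smoking" is not selected, so its arc is dropped.
dependencies = {
    "Fever": ["Infection"],
    "Cough": ["Infection", "Smoking"],
    "Infection": [],
}
nodes, arcs = build_network(dependencies, ["Fever", "Cough", "Infection"])
print(nodes)
print(arcs)
```

Because the network is recomputed from the current selection each time, adding or removing a concept only requires calling the function again with the updated selection.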

The primary use of this software is to semi-automate the construction of dependency networks (Bayesian networks) from a domain ontology knowledge base.

Triggered by a recent interesting New Scientist article on the too frequent incorrect use of probabilistic evidence in courts, I introduce the basic concepts of probabilistic inference with a toy model, and discuss several important issues that need to be understood in order to extend the basic reasoning to real-life cases. In particular, I emphasize the often neglected point that degrees of belief are updated not by "bare facts" alone, but by all available information pertaining to them, including how they have been acquired. In this light I show that, contrary to what is claimed in that article, there was no "probabilistic pitfall" in the Columbo episode cited as an example of "bad mathematics" yielding "rough justice". Instead, such a criticism could provoke a "negative reaction" to the article itself and to the use of Bayesian reasoning in courts, as well as in all other places in which probabilities need to be assessed and decisions need to be made. Besides its introductory and recreational aspects, the paper touches on important questions, such as: the role and evaluation of priors; the subjective evaluation of Bayes factors; the role and limits of intuition; "weights of evidence" and "intensities of belief" (following Peirce) and "judgment leanings" (introduced here), including their uncertainties and combinations; the role of relative frequencies in assessing and expressing beliefs; pitfalls due to "standard" statistical education; and the weight of evidence mediated by testimonies. A small introduction to Bayesian networks, based on the same toy model (complicated by the possibility of incorrect testimonies) and implemented using Hugin software, is also provided, to stress the importance of formal, computer-aided probabilistic reasoning.
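To make the mention of priors, Bayes factors, and weights of evidence more concrete, the following Python sketch shows belief updating in odds form. It is an illustration under stated assumptions and not the paper's own formulation: the pieces of evidence are assumed to be conditionally independent, the weight of evidence is taken as the base-10 logarithm of the Bayes factor (one common convention), and all function names and numbers are hypothetical.

```python
import math


def posterior_odds(prior_odds, bayes_factors):
    """Multiply prior odds by each Bayes factor (assumes independent evidence)."""
    odds = prior_odds
    for factor in bayes_factors:
        odds *= factor
    return odds


def weight_of_evidence(bayes_factor):
    """Base-10 log of a Bayes factor; weights add where Bayes factors multiply."""
    return math.log10(bayes_factor)


def odds_to_probability(odds):
    """Convert odds in favor of a hypothesis into a probability."""
    return odds / (1.0 + odds)


# Hypothetical numbers: even prior odds, one piece of evidence favoring the
# hypothesis 10:1 and one disfavoring it 1:2.
factors = [10.0, 0.5]
odds = posterior_odds(1.0, factors)
total_weight = sum(weight_of_evidence(f) for f in factors)
print(odds, odds_to_probability(odds), total_weight)
```

Working on the logarithmic scale turns the product of Bayes factors into a sum, which is what makes the combination of several pieces of evidence easier to reason about intuitively.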